Calum Mackenzie

q has both the symbol and character atomic datatypes. Both symbols and lists of characters (commonly referred to as strings) are used for working with text data. The two appear extensively throughout q code but in any given situation there are reasons for strongly favouring one over the other. This post will start by covering those reasons and then go on to consider methods besides these for saving textual data to disk that can be more efficient and without the shortcomings of either datatype.

Symbols and Strings are Stored Differently

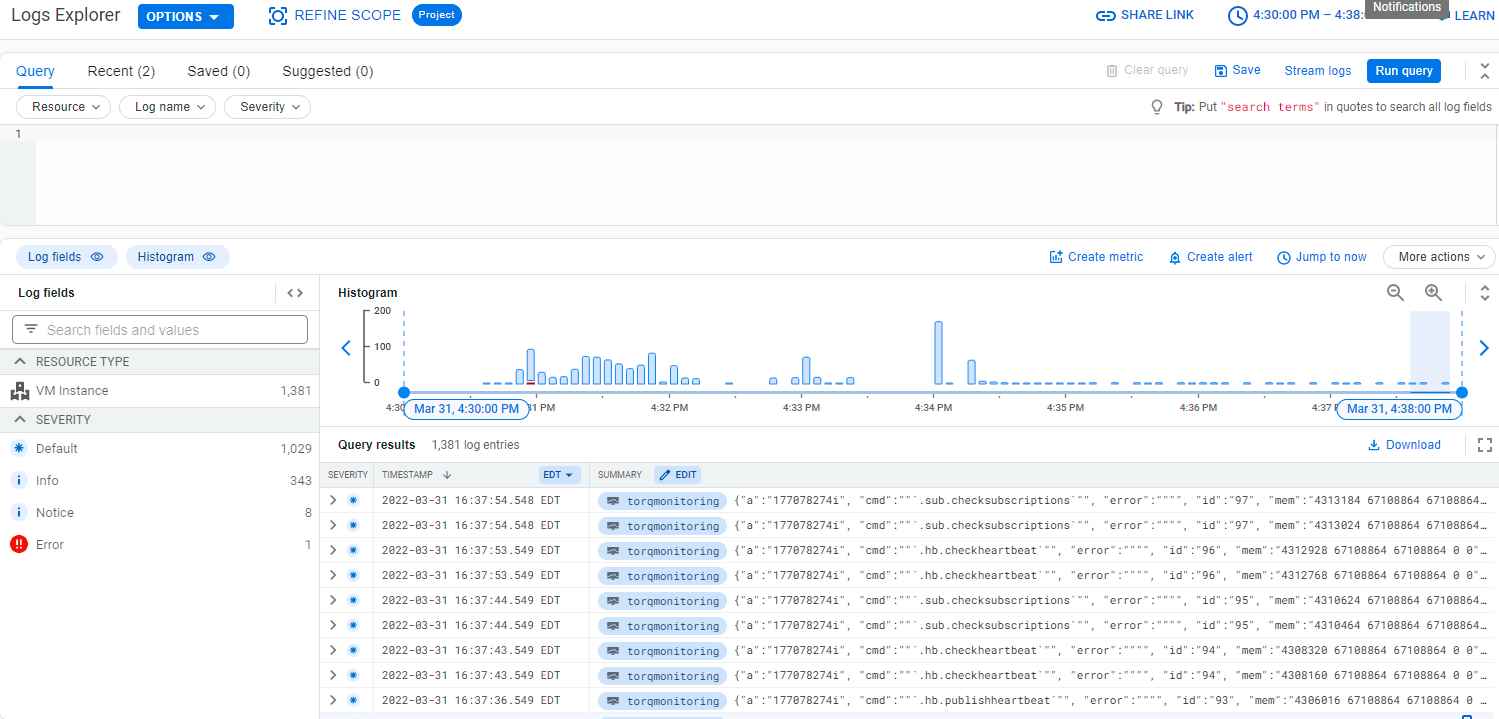

As a jumping off point imagine using a select query to filter a subset of data from a table. The artificial data in this example is created by generating 1,000 unique, random symbols of length eight and then from those taking 1,000,000 random draws:

q)syms:1000000?-1000?`8We can put this and its corresponding char form into a table (note that this table has no attributes on it):

q)show t:([]str:string syms;syms)

str syms

-------------------

"dpjdfoph" dpjdfoph

"nmhbooij" nmhbooij

"aedpgpon" aedpgpon

... Extracting the subset of interest using the symbol column vs. the char column:

q)\ts select from t where syms in `dpjdfoph`nmhbooij`aedpgpon`ohmdaecl`ogcejddh

11 9437792

q)\ts select from t where str in string `dpjdfoph`nmhbooij`aedpgpon`ohmdaecl`ogcejddh

84 16778432This order of magnitude gulf is down to an underlying difference in how the two datatypes are represented in memory. Symbols are interned strings: consequently, comparisons involving them are between their numeric pointers and so are atomic. On the other hand, comparisons between two char lists can involve, at the worst, as many operations as there are characters making up one of the vectors. Furthermore, a list/column of char vectors is a nested structure with each string (in general) varying in length. The impact this has on memory can be sizeable due to any list having a 16-byte overhead and also an 8-byte pointer for every element it contains. Combined with kdb+’s implementation of the buddy memory-allocation algorithm the requirements for the raw data can be blown up significantly. See this post for further details.

Apart from time efficiency there is also storage space to consider. Saving down without using any sort of compression:

q)`:hdb/tu/ set .Q.en[`:hdb;t];

q)\ls -lh hdb

"total 16K"

"-rw-rw-r-- 1 cmackenzie cmackenzie 8.8K Apr 28 09:05 sym"

"drwxrwxr-x 2 cmackenzie cmackenzie 4.0K Apr 28 09:05 tu"

q)\ls -lh hdb/tu

"total 25M"

"-rw-rw-r-- 1 cmackenzie cmackenzie 7.7M Apr 28 09:15 str"

"-rw-rw-r-- 1 cmackenzie cmackenzie 9.6M Apr 28 09:15 str#"

"-rw-rw-r-- 1 cmackenzie cmackenzie 7.7M Apr 28 09:15 syms" The original str object is made into two files: str#, which contains the data as essentially one continuous string, and str, which is used to construct the breakpoints separating strings. syms‘ storage requirements (alongside the sym file containing the enumeration domain; see the following paragraph for details) are matched by str alone and exceeded twice over when bringing str# into the equation. Say instead some sort of compression e.g. kdb-ipc is used for saving down the table:

q)(`:hdb/tc/;16;1;0) set .Q.en[`:hdb;t];

q)\ls -lh hdb/tc

"-rw-rw-r-- 1 cmackenzie cmackenzie 3.2M Apr 28 09:40 str"

"-rw-rw-r-- 1 cmackenzie cmackenzie 9.6M Apr 28 09:40 str#"

"-rw-rw-r-- 1 cmackenzie cmackenzie 3.1M Apr 28 09:40 syms" str and syms achieve similar compression ratios whereas str# is unaffected. In this example the symbol type uses less than a quarter of the disk space that the char type does. This difference in outcomes is down to the fact that the column with symbol datatype has undergone enumeration i.e. a list comprised of the distinct values of syms (and any other subsequent symbol columns added on to tc) has been made as sym (mentioned previously) and the file named syms now contains in place of the original values their corresponding positional index within sym. The index list requires eight bytes of diskspace per item and the sym file however much it takes to store the unique symbols that are to be saved down. On the whole, for columns with not very many distinct values this approach is more storage efficient than saving down the original symbol column.

Because they can be enumerated, symbols should be used with data that has high levels of repetition. Conversely, the char type comes into it’s own in a situation such as there being a column comprised entirely of unique values (e.g. one-off transaction IDs). Repeating the example from before but now starting off with all 1,000,000 random draws being distinct from one another makes this clear :

q)syms:-1000000?`8 Saving down the data like before:

q)\ls -lh hdb

"total 8.6M"

"-rw-rw-r-- 1 cmackenzie cmackenzie 8.6M Apr 28 09:28 sym"

"drwxrwxr-x 2 cmackenzie cmackenzie 4.0K Apr 28 09:28 tu"

q)\ls -lh hdb/tu

"total 25M"

"-rw-rw-r-- 1 cmackenzie cmackenzie 7.7M Apr 28 09:28 str"

"-rw-rw-r-- 1 cmackenzie cmackenzie 9.6M Apr 28 09:28 str#"

"-rw-rw-r-- 1 cmackenzie cmackenzie 7.7M Apr 28 09:28 syms" Going with symbols in a case such as this is especially detrimental due to such a large sym list being needed. With on-disk databases this leads to “sym file bloat” – where columns with few repetitions (such as orderID columns in finance) are stored as symbols rather than strings. Queries are hampered by having to search through such a large structure which can drastically increase lookup times.

Encoding

With respect to symbols being inappropriate for columns made up of non-repeating values there is another approach that improves upon using char vectors. This approach offers both high-performance querying and undemanding storage requirements. Generally, some sort of integer encoding which reduces the amount of storage needed can be applied to data for saving down and the same encoding applied once again to values being looked-up.

(Provide) ASCII and You Shall Receive (Int)

q provides a set of (composite) functions for performing binary-to-text encoding/decoding. These are: .Q.j10 (and it’s inverse .Q.x10) which expects as input a char vector of max length ten and only containing characters from the alphanumerics, “+”, and “/”; and .Q.j12, (inverse .Q.x12) which can work on char vectors of up to length 12 but restricted to a a universe of (0,1,2,…,9,A,…,Z,a,..,z). The result of the .Q.j encoding in either case is an eight-byte long integer.

ISIN codes are the perfect candidates for .Q.j12 encoding. Because storing down char directly requires a list of eight-byte integers to represent the length of each string anyway, the difference in disk usage between the methods is however much storage is needed by the original char list. For example, with a column of 10 000 000 ISIN codes (without exchange extension):

q)\ls -lh hdb/t

"total 287M"

"-rw-rw-r-- 1 cmackenzie cmackenzie 77M Apr 28 09:42 encoded"

"-rw-rw-r-- 1 cmackenzie cmackenzie 77M Apr 28 09:42 isin"

"-rw-rw-r-- 1 cmackenzie cmackenzie 134M Apr 28 09:42 isin#"

Setting a GUID Example

Alternatively, arbitrary char vectors of length 16 or less can be encoded one-to-one into the GUID datatype with:

q)enc:{0x0 sv `byte$-16$x} First, the char vector x is left padded with however much whitespace is needed to make it up to 16 characters; the byte casting returns the two digit hexadecimal code of each character in this 16-byte string; sv with 0x0 as it’s first argument in this context converts the 32-digit hex number into the GUID datatype which puts it in the form 8-4-4-4-12, e.g. :

q)enc"a char vector"

20202061-2063-6861-7220-766563746f72enc takes a vector as input but returns an atom meaning attributes can be applied and the associated optimisations can be realised. The inverse function for returning readable results after performing a lookup is:

q)dec:{trim `char$0x0 vs x}The storage implications of this approach are seen by looking at another example where the amount of distinct values is large enough to be problematic for the symbol datatype. Here str is a list of 50 000 000 char vectors taken from a pool of 5004 distinct 12-byte strings and guids is their equivalent after applying enc. Looking at the numbers:

q)\ls -lh guidexample

"total 1.8G"

"-rw-rw-r-- 1 cmackenzie cmackenzie 763M Apr 28 10:47 guids"

"-rw-rw-r-- 1 cmackenzie cmackenzie 382M Apr 28 10:47 str"

"-rw-rw-r-- 1 cmackenzie cmackenzie 668M Apr 28 10:47 str#"In this uncompressed state the difference is sizeable; the encoded form requires only 70% the diskspace in comparison to the char form. When querying there is a noticeable difference as well:

q)1?str

"ynY7nORXfCip"

q)\ts select str from t where str like "ynY7nORXfCip"

427 67240528

q)\ts select .Q.fu[dec each;guids] from t where guids = enc "ynY7nORXfCip"

113 67240880

q)\ts select count i by str from t

4468 3221226032

q)\ts update .Q.fu[dec each;guids] from select count i by guids from t

2821 3221226576

The GUID datatype performs much better for lookups, on par with symbols.

Putting it all Together

Q.j10/12 on their own can only be used with strings as long as 10/12 characters chosen from a specific subset of the character universe:

q).Q.x10 .Q.j10 "string21characterlong"

"racterlong"

q).Q.x12 .Q.j12 "ASTRINGWITH$"

"ASTRINGWITI0"

Longer strings can be encoded if code with similar functionality to .Q.j10 etc. is used alongside the GUID datatype. Specifically, if input is limited to a subset of ASCII with size elements, then the maximum length supported is:

floor size xlog (0W-1) xexp 264 and 36 substituted in place of size for .Q.j10 and .Q.j12 respectively gives theoretical limits of 21 and 24. The 21 character limit is achieved by using something similar to the following:

universe:" .ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789";

enc:{

b:{-6#0b vs x}'[universe ? -21$x];

seq:8 cut (2#0b),raze b;

0x0 sv `byte$2 sv' seq};

dec:{

seq:{-8#0b vs x}'[`long$0x0 vs x];

b:6 cut 2_raze seq;

ltrim universe @ 2 sv' b};Whitespace rather than “A” in the zeroth index of the universe is more natural to some extent; under .Q.j10 there are results such as:

q)(.Q.j10 "Asymmetric") ~ .Q.j10 "symmetric"

1b

q).Q.x10 .Q.j10 "hchoo"

"AAAAAhchoo"Line-by-line enc is doing the following:

- Left-padding it’s input to be 21 characters; returning the position of each of these characters within

universeas a number in the range 0-63; expressing each of these numbers as a six-digit binary value. - Joining these to make a 128-bit value and then dividing this into eight octets.

- Representing each of these octets as two-digit hex numbers and formatting these altogether into a GUID.

dec performs the same set of operations but inverted. On large scales this technique offers a forty-percent reduction in storage for holding the same information. Once again the char datatype is far outclassed in terms of lookup performance. Here g and l both have 1,000 items:

q)\t:10 select dec each guids from guidb2texample where guids in g

692

q)\t:10 select strs from guidb2texample where any strs like/: l

73620

The 24-character limit is achieved slightly differently. Due it being an even number it can be done by effectively carrying out a .Q.j12 encoding on each half of the string and piecing these together into a GUID:

q)enc:{0x0 sv raze {0x0 vs' 36 sv .Q.nA?x} each 12 cut #[24 - count x;"0"],x}

q)dec:{@[.Q.nA] raze {#[12 - count l;0],l:36 vs 0x0 sv x} each 8 cut 0x0 vs x}The limitation of this encoding is that strings which are otherwise the same but differ in the number of leading zeros are encoded to the same GUID:

q)(enc "00000220429") ~ enc "220429"

1b

q)dec enc "AB"

"0000000000000000000000AB"Making a Hash of Things

Hashing is yet another means of representing large amounts of data in the form of 16-byte GUIDS. q has built-in as a keyword md5 which despite it’s unsuitability for secure cryptography is useful for storage-reduction purposes. The result of md5 is a hexadecimal byte stream vector which can be formatted into a GUID atom like was seen with the previous approach. md5 is different though as it’s input is limited to only what can practically be held in memory. md5 is essentially one-way thus the column it is being applied to cannot be readily converted back into a human-readable format after querying. Instead, the column should be considered as a key that is grouped with more interesting information in other columns. If the column needs to be human-readable then the .Q.j/GUID approach should be used, assuming the strings are in a form that allows for it.

Take as an example, simulating AquaQ’s work with a client, a system receiving from upstream an ID field made up of strings 50 characters long. In the use case in question, we didn’t care about the actual ID itself, just that values with the same ID maintained their relationship:

q)ids:50 cut (5000*50)?.Q.an

q)n:50000000

q)t:([]s:n?ids)

q)hashguid:{0x0 sv md5 x}

q)update encoded:.Q.fu[hashguid each;s] from `t

q)`:md5example/ set tHaving a look like before demonstrates the impact the hash has on storage:

q)\ls -lh md5example

"total 3.6G"

"-rw-rw-r-- 1 cmackenzie cmackenzie 763M Apr 28 16:07 encoded"

"-rw-rw-r-- 1 cmackenzie cmackenzie 382M Apr 28 16:07 s"

"-rw-rw-r-- 1 cmackenzie cmackenzie 2.5G Apr 28 16:07 s#"

q)%[763;282+2500]

0.2742631

The difference is more than substantial. Were it the case that the strings coming from upstream were even longer then the storage needed by s would scale in proportion whereas the diskspace occupied by the encoded column would remain constant (holding the number of records as fixed). From the perspective of a user doing a lookup on a single ID:

q)\t select from md5example where encoded = hashguid "bv_6smjV5zhxkQTvh3dWzd0peN7GeZ0WZZmVGLP8osnIS_mmRm",i = last i

7474

q)\t select from md5example where s like "bv_6smjV5zhxkQTvh3dWzd0peN7GeZ0WZZmVGLP8osnIS_mmRm",i = last i

28359Similarly for a more computationally-costly query (running q now on a more performant system in the interest of time):

q)\t select count i by encoded from t

3559

q)\t select count i by s from t

9126On multiple fronts the facility of md5 hashing offers a great design choice in the context of handling text data .

Summary

This post collects together a number of different methods of storing text data, along with their associated pros and cons. Storing data as strings in kdb+ is straightforward but depending on the use case there may be better alternatives with respect to both storage space and query performance.

If you would like assistance with any form of kdb+ optimisation, please reach out.

Share this: